©2026 ZenABM - All Rights Reserved.

AI is changing ABM faster than most marketing teams are adapting. The companies getting the most from LinkedIn ABM right now are not the ones with the biggest budgets but the ones using AI to build better target account lists, score accounts more accurately, and detect intent signals faster than any human team could manually.

This post covers the key lessons from Katya Tarapovskaia’s session at the ZenABM ABM Bootcamp 2026, where she broke down practical AI ABM workflows from segmentation to signal detection to automated account scoring.

You can watch the full session recording on YouTube here.

Short on time?

Here’s a quick rundown of the guide:

The honest answer is that AI is genuinely transformative in some parts of ABM and almost useless in others.

Getting this distinction right matters because teams that over-rely on AI for the wrong things end up with beautiful automated workflows producing bad outputs.

AI adds real value in ABM in ways like the following:

Enriching 10,000 accounts and scoring each one against your ICP criteria manually would take weeks.

AI classification with GPT-4o mini does it in hours, at near-perfect accuracy and at a cost of less than $1 for thousands of accounts, which makes manual account review essentially indefensible as a use of your team’s time.

Your CRM has company name, size, and industry, but AI can analyze everything else (the company’s website, their LinkedIn posts, their job listings, and their press releases) and derive signals like “actively expanding their data infrastructure team” or “recently switched CRM” that no data provider sells directly.

These derived signals are where the real competitive advantage lives because your competitors cannot buy them off the shelf either.

Feed AI your full history of won and lost deals and let it identify the patterns your team has been missing.

These are questions that are nearly impossible to answer reliably by looking at individual deals but trivial for AI to surface from a dataset of hundreds.

When a target account hits your engagement threshold, AI can pull together everything you know about that account (ad engagement history, website visits, hiring signals, and LinkedIn activity) and write a one-paragraph brief for your BDR before they reach out.

This means your BDR walks into the conversation with context rather than starting cold.
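As a rough illustration of the brief workflow, the sketch below gathers the four signal types into a single prompt for a language model. The `AccountSignals` class, its field names, and the prompt wording are all assumptions made for this example and do not come from the session; the call to the model itself is left out.

```python
from dataclasses import dataclass, field


@dataclass
class AccountSignals:
    """Everything known about one account (all field names are hypothetical)."""
    name: str
    ad_engagement: list[str] = field(default_factory=list)
    website_visits: list[str] = field(default_factory=list)
    hiring_signals: list[str] = field(default_factory=list)
    linkedin_activity: list[str] = field(default_factory=list)


def build_brief_prompt(account: AccountSignals) -> str:
    """Assemble the signals into a prompt asking for a one-paragraph brief."""
    sections = {
        "Ad engagement": account.ad_engagement,
        "Website visits": account.website_visits,
        "Hiring signals": account.hiring_signals,
        "LinkedIn activity": account.linkedin_activity,
    }
    lines = [f"Write a one-paragraph brief for a BDR about {account.name}."]
    for title, items in sections.items():
        # Mark empty sources explicitly so the model is not tempted to invent signals.
        body = "; ".join(items) if items else "no data"
        lines.append(f"{title}: {body}")
    return "\n".join(lines)


account = AccountSignals(
    name="Example Corp",
    ad_engagement=["clicked data-quality campaign 3 times"],
    website_visits=["pricing page twice this week"],
)
prompt = build_brief_prompt(account)
print(prompt)
```

Writing "no data" for missing sources is a deliberate choice here: a brief that admits a gap is more useful to a BDR than one padded with guesses.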

For now, AI is not worth using for tasks like the ones below:

AI can score accounts reliably, but deciding which accounts your CEO should personally reach out to still requires human judgment about relationship context and strategic fit that no model can fully capture.

AI can generate ad copy variants quickly and cheaply, but the strategic decision about what message to lead with for a specific ICP persona still requires a human who understands that persona deeply enough to know what will resonate versus what will fall flat.

No AI model reliably predicts exactly when a deal is ready to close from marketing signal data alone, because the variables that determine timing (internal budget cycles, executive sponsor availability, and competing priorities) are rarely visible in the data your marketing stack collects.

The most impactful AI ABM workflow is also the most foundational: automated ICP scoring of your target account list.

The process Katya recommends:

Start with your existing customers, or if you have fewer than 30, a manually curated set of ideal target accounts. These are your ground truth for what good looks like, and the quality of everything downstream depends on getting this right.

For each truth list account, enrich industry, sub-industry, company size, revenue, tech stack, growth stage, recent funding, hiring patterns, website traffic, and LinkedIn follower count.

The more data points the AI has to work with, the more reliable its scoring becomes.

Describe what makes a good-fit account based on the patterns in your truth list.

This prompt becomes your ICP scoring engine, and the specificity of the prompt is the single biggest determinant of scoring accuracy.

Execute the prompt in Clay against your full prospect pool. Each account gets an ICP fit score, typically 1 to 10 or Tier 1, Tier 2, or Tier 3, plus a brief explanation of why it scored that way, which gives you both the classification and the reasoning behind it.

Only Tier 1 and Tier 2 accounts go into your paid ABM campaigns, while Tier 3 goes into a separate nurture track at a much lower budget.

This ensures your LinkedIn ad spend concentrates on accounts that actually look like your best customers.

The result is that your LinkedIn ad budget targets the right accounts, and LinkedIn’s algorithm has a better signal to optimize toward.
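The scoring and routing steps above can be sketched in a few lines. This is a minimal illustration, not Clay's actual interface: the ICP description, the JSON reply format, and the function names are assumptions made for this example, and the model replies are hard-coded stand-ins for what a real run would return.

```python
import json

# Hypothetical ICP description; in practice it comes from patterns in your truth list.
ICP_DESCRIPTION = "B2B SaaS, 200-2000 employees, hiring data engineers"


def build_scoring_prompt(account: dict) -> str:
    """Combine the ICP description with one enriched account record."""
    return (
        f"ICP: {ICP_DESCRIPTION}\n"
        f"Account: {json.dumps(account, sort_keys=True)}\n"
        'Reply with JSON: {"tier": 1, 2, or 3, "reason": "<one sentence>"}'
    )


def parse_score(raw_reply: str) -> dict:
    """Validate the model's reply; reject anything that is not a known tier."""
    result = json.loads(raw_reply)
    if result.get("tier") not in (1, 2, 3):
        raise ValueError(f"unexpected tier: {result.get('tier')!r}")
    return result


def route(scored_accounts: list[dict]) -> dict:
    """Tier 1 and Tier 2 go to paid campaigns; Tier 3 goes to nurture."""
    routed = {"paid": [], "nurture": []}
    for account in scored_accounts:
        bucket = "paid" if account["tier"] in (1, 2) else "nurture"
        routed[bucket].append(account["name"])
    return routed


# Stand-in replies; a real run would get these from the model.
replies = {
    "Acme Data": '{"tier": 1, "reason": "Matches size and hiring pattern."}',
    "Tiny Shop": '{"tier": 3, "reason": "Too small, no data team."}',
}
scored = [{"name": name, **parse_score(reply)} for name, reply in replies.items()]
routed = route(scored)
print(routed)
```

Asking for the reason alongside the tier keeps the explanation attached to each score, and validating the tier before routing stops a malformed reply from silently landing an account in a paid campaign.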

This is the fix for the “bad list” problem that Bilal Ahmad described in the list-building session, where a bad list trains LinkedIn’s algorithm on the wrong companies and the damage compounds with every dollar spent.

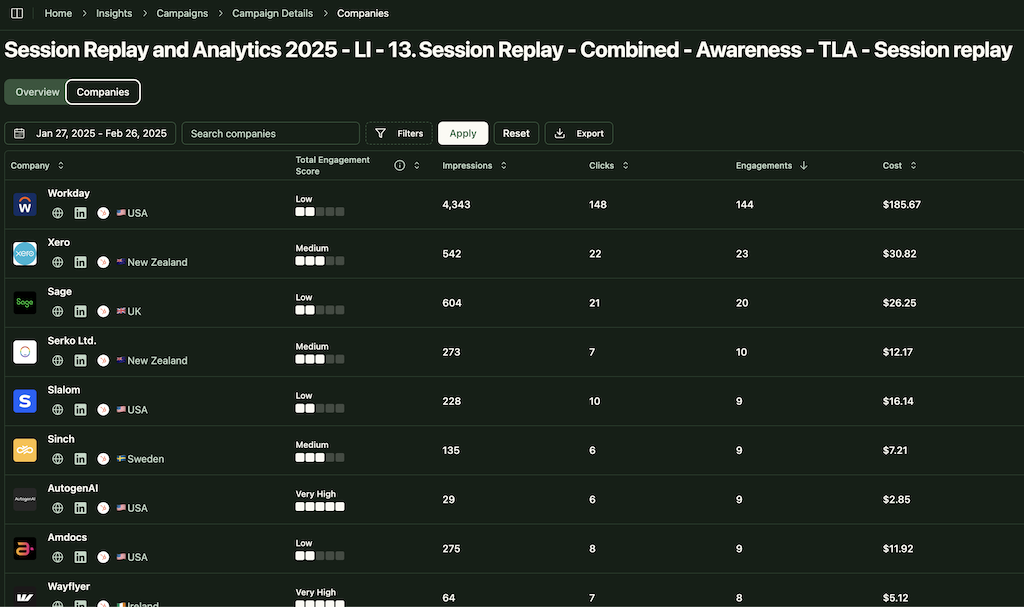

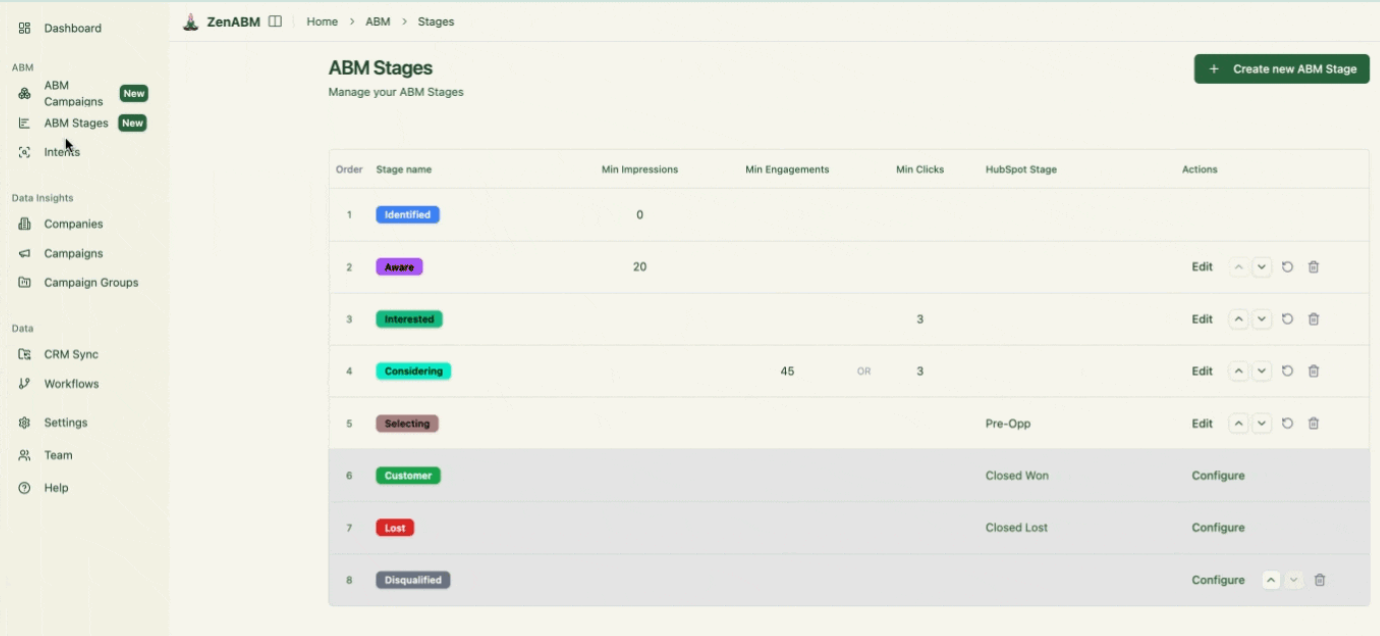

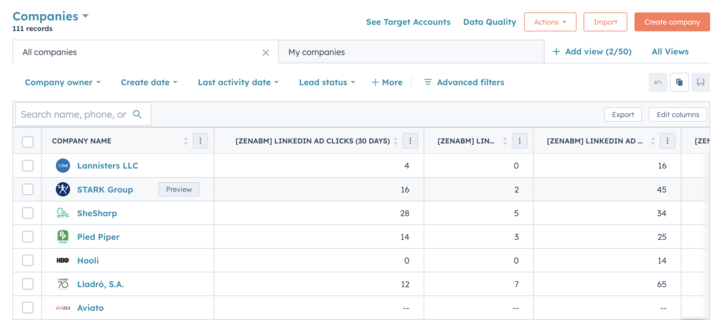

ZenABM becomes a strong feedback layer after the launch.

Its company-level engagement reporting, account-stage progression, and CRM sync help you validate whether your Tier 1 and Tier 2 accounts are actually the ones responding, which makes it easier to refine your AI scoring model over time instead of treating the first scoring pass as final.

Third-party intent data, the kind that 6sense, Bombora, and similar platforms sell, is useful but incomplete.

It tells you that someone at a company searched for a category of solution, but it does not tell you who, why, or how serious the intent is.

AI can build a richer picture of intent by combining multiple data sources that no single provider covers:

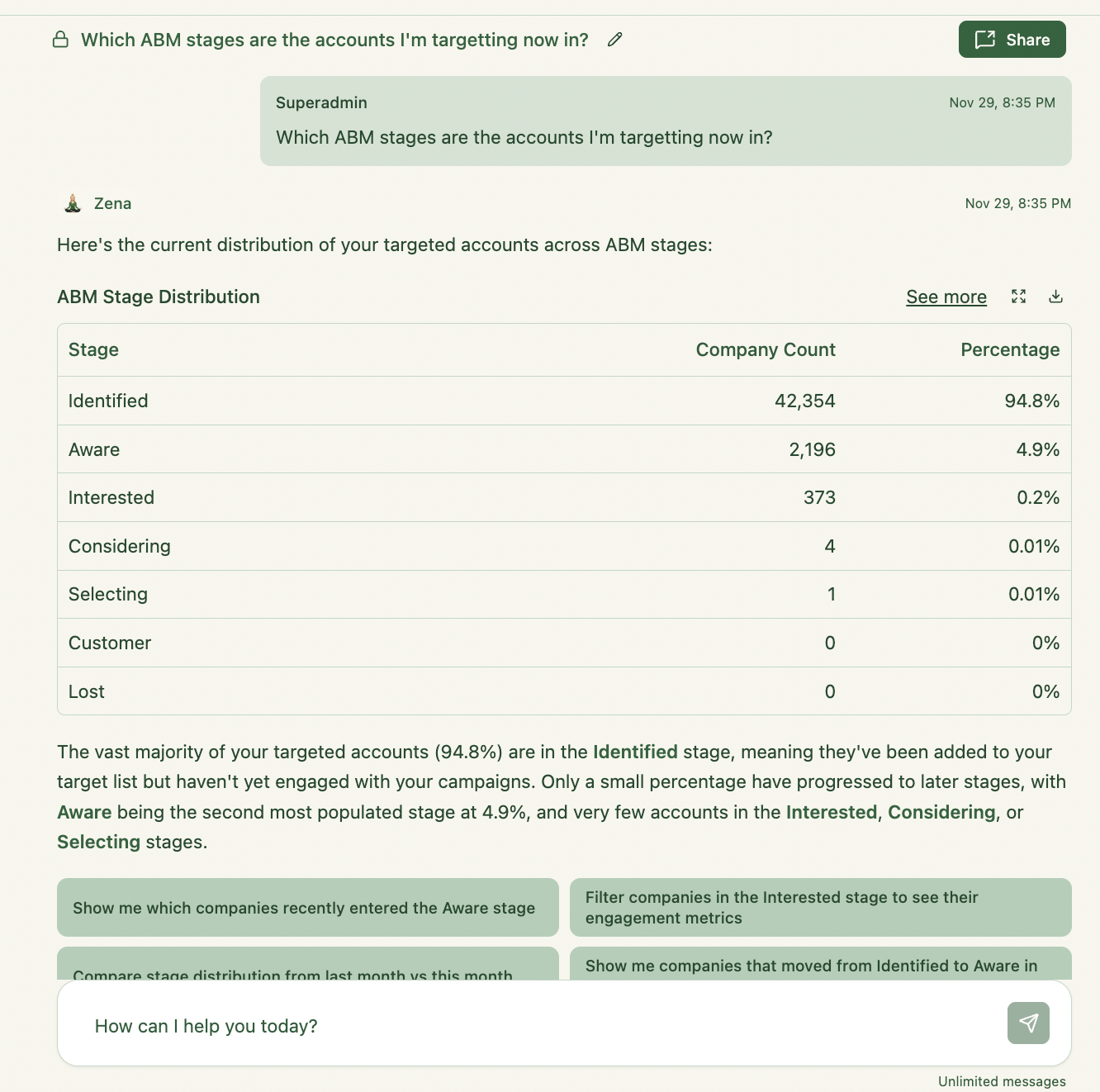

From ZenABM: Which specific campaigns and ad types is this account engaging with?

This tells you which pain point or use case they are researching, and because it is first-party data from your own campaigns, it is far more reliable than third-party keyword surge signals.

More specifically, ZenABM gives you company-level engagement on LinkedIn ads, engagement scoring, qualitative intent by campaign message, and account-level campaign history.

That means AI is not just reading “this company clicked an ad” but “this company is engaging with this specific theme and appears to be moving toward a stronger buying stage.”

Which pages did they visit, how many times, and from which city?

High-intent pages (pricing, demo, competitor comparison, and specific product pages) are the strongest signals, and the recency and frequency of visits tell you how actively the account is evaluating right now.

Is this company actively hiring for roles related to the problem your product solves?

A company hiring 5 data engineers is likely investing in data infrastructure, which is directly relevant if you sell data tools. Hiring signals are particularly valuable because they indicate budget commitment rather than just curiosity.

Are their executives posting about the problem your product solves?

A CTO posting about data quality challenges is a warm signal for a data quality tool because it means the problem is top-of-mind at the leadership level, not buried in a technical team’s backlog.

Recent funding rounds often precede technology investment decisions because the capital is earmarked for scaling infrastructure.

New leadership often means new vendor evaluations because incoming executives tend to re-evaluate the tools their predecessors chose.

AI combines these signals and produces an intent score that is substantially more reliable than any single data source.

The key principle is that signals from your first-party data (ad engagement and website visits) always take precedence over third-party signals, because an account clicking your ads and visiting your pricing page outweighs 20 third-party intent data points from 6sense.
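One simple way to encode the first-party precedence rule is a weighted score with a ceiling on the third-party contribution. The sketch below is only an illustration: every weight, the cap, and the signal names are assumptions made for this example, not values from the session, and they would need tuning against your own deal data.

```python
# Hypothetical weights; tune them against your own closed-won data.
FIRST_PARTY_WEIGHTS = {"ad_engagement": 10, "pricing_page_visit": 15, "demo_page_visit": 15}
DERIVED_WEIGHTS = {"relevant_hiring": 5, "exec_post": 4, "recent_funding": 3, "new_leadership": 3}
THIRD_PARTY_POINT = 1   # value of one third-party intent data point
THIRD_PARTY_CAP = 10    # ceiling so third-party volume cannot outrank first-party


def intent_score(signals: dict) -> int:
    """Sum weighted signals, capping the total third-party contribution."""
    score = 0
    for name, weight in {**FIRST_PARTY_WEIGHTS, **DERIVED_WEIGHTS}.items():
        if signals.get(name):
            score += weight
    third_party = signals.get("third_party_points", 0) * THIRD_PARTY_POINT
    return score + min(third_party, THIRD_PARTY_CAP)


engaged = intent_score({"ad_engagement": True, "pricing_page_visit": True})
surging = intent_score({"third_party_points": 20})
print(engaged, surging)
```

With these example weights, the account that clicked an ad and visited the pricing page scores higher than the account with 20 third-party data points, because the cap limits how much third-party volume can add.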

For more on how this connects to your CRM, see account scoring and engagement signals for ABM.

One of the highest-leverage AI workflows for ABM is also one of the least used: feeding AI your complete closed-won and closed-lost deal history and asking it to find the patterns.

Most ABM teams build their ICP based on intuition and a few memorable wins, but AI can systematically analyze hundreds of deals and surface patterns that are invisible at the individual deal level:

This analysis should feed directly into your target account list criteria, your ICP scoring model, and your ad messaging.

If 70% of your best customers are companies running Apache Iceberg for data storage, that is both a targeting criterion (find more companies using Iceberg) and a messaging angle (speak directly to Iceberg users’ specific pain points).
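Before handing deal history to a model, a plain frequency comparison can already show whether an attribute separates wins from losses. The sketch below assumes each deal is a dictionary with an `outcome` field and a hypothetical `storage` field; the sample deals are invented for illustration.

```python
from collections import Counter


def attribute_rates(deals: list[dict], attribute: str) -> dict:
    """For each attribute value, the share of won and of lost deals that have it."""
    totals = Counter()
    by_value = {}
    for deal in deals:
        outcome = deal["outcome"]  # expected to be "won" or "lost"
        totals[outcome] += 1
        by_value.setdefault(deal[attribute], Counter())[outcome] += 1
    return {
        value: {o: counts[o] / totals[o] for o in ("won", "lost") if totals[o]}
        for value, counts in by_value.items()
    }


# Invented sample data: 4 won deals and 4 lost deals.
deals = (
    [{"outcome": "won", "storage": "Iceberg"}] * 3
    + [{"outcome": "won", "storage": "other"}]
    + [{"outcome": "lost", "storage": "Iceberg"}]
    + [{"outcome": "lost", "storage": "other"}] * 3
)
rates = attribute_rates(deals, "storage")
print(rates)
```

A value that appears in a much larger share of won deals than lost deals is a candidate for both a targeting criterion and a messaging angle; with only a handful of deals the gap can easily be noise, so treat small samples with caution.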

Katya recommends running this analysis quarterly and updating your ICP scoring model each time, because your best customers in Q4 2025 may look different from your best customers in Q2 2026 as your product evolves and your market position changes.

ZenABM can strengthen this loop because it gives you a cleaner record of which accounts engaged with LinkedIn campaigns before deals opened, how they moved through ABM stages, and which messages resonated most.

That makes your closed-won and closed-lost analysis more useful for updating both targeting and messaging.

The good news is that you do not need enterprise-level AI tools to run these workflows.

The stack Katya recommends:

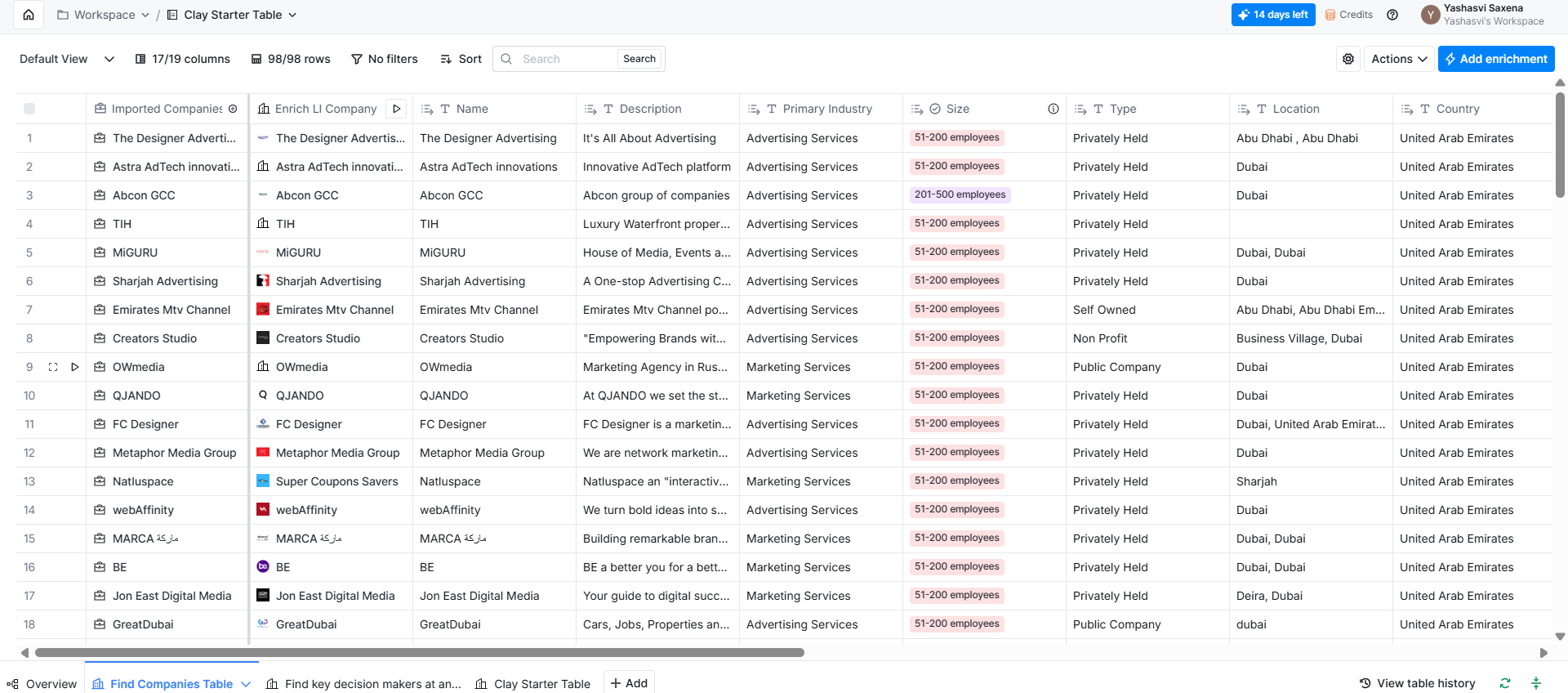

The orchestration layer for enrichment and AI classification.

It connects to 50 or more data sources, runs AI prompts against enriched data, and outputs scored account lists to wherever they need to go.

For more on building target account lists with Clay specifically, see Clay for target account list building.

The AI model for classification.

Fast, cheap, and accurate enough for ICP scoring at scale. More expensive models are not significantly better for this particular use case because the task is classification rather than creative generation.

The source of first-party LinkedIn ad engagement data, specifically, which accounts are engaging with which campaigns.

This is the highest-quality intent signal in your stack because it comes directly from your own campaigns rather than being inferred from third-party browsing behavior.

More specifically, ZenABM gives teams company-level LinkedIn ad engagement, campaign-specific context, account scoring, ABM stages, CRM sync, ABM campaign performance and revenue attribution dashboards, job-title analytics, webhook automation, and more.

That makes it easier for AI workflows to start from live account behavior instead of static snapshots or generic intent scores.

In fact, ZenABM provides its own AI analytics agent (Zena AI) that answers campaign performance and attribution questions in natural language, so you don’t have to bury your face in complicated dashboards.

Base company and contact data. Use these as inputs to Clay rather than as the primary tool for your AI workflows, because their value is in raw data provision, not in the classification and routing that Clay handles.

Where your AI-scored accounts, stage data, and signal summaries ultimately live.

AI workflows that do not push data to your CRM create parallel systems that sales will ignore, which defeats the entire purpose of running the workflow in the first place.

Make, Zapier and n8n are some tools that can help you knit all your tools together into an automated stack.

Humantic AI provides personality profiles for authentic contact-level personalization for account-based marketing.

It suggests what to write to a lead in an email, when to send them a LinkedIn DM, and so on.

The biggest takeaway from this article is that AI works best in ABM when it is used to strengthen core processes, not to replace strategic thinking. It can score accounts faster, combine fragmented signals more intelligently, and surface patterns your team would miss manually, but it still depends on good source data, clear ICP logic, and human judgment where context matters most.

That is also why first-party signal quality matters so much.

ZenABM helps make these AI workflows more useful by supplying company-level LinkedIn engagement, account-stage movement, campaign-specific context, CRM sync, and webhook-ready signals that AI can actually act on.

If you want your AI ABM workflows to be grounded in real buyer behavior instead of generic assumptions, ZenABM is a strong layer to add.

Try ZenABM for free (37-day free trial) or book a demo now to learn more!

Some common questions about AI ABM workflows and their answers:

AI is making ABM more precise and less labor-intensive across three main areas: automated ICP scoring at scale, replacing manual account review; derived signal detection from multiple data sources, replacing guesswork about intent; and closed-won pattern analysis, replacing intuition-based ICP definitions.

The fundamentals of ABM have not changed; AI just executes them faster and more accurately than a human team could do manually.

Clay for enrichment and AI classification, GPT-4o mini or Claude Haiku for the classification model, ZenABM for first-party LinkedIn engagement signals, and your CRM (HubSpot or Salesforce) as the destination for all AI-generated data.

You do not need enterprise AI tools because the models available through Clay’s AI column are sufficient for most ABM classification tasks.

With a well-constructed prompt and good training data (your existing customers as the truth list), AI classification achieves 90 to 95% accuracy for ICP fit scoring.

The main sources of error are vague prompts that do not give the model enough specificity to differentiate, inconsistent training data where your customer base is too diverse to produce clear patterns, and asking the model to score on criteria that are not detectable from publicly available information about a company.

Use AI to score your target account list before uploading it to LinkedIn Campaign Manager, then remove low-fit (Tier 3) accounts from your paid campaigns entirely and upload only Tier 1 and Tier 2 accounts to your LinkedIn matched audience.