©2026 ZenABM - All Rights Reserved.

Over 16 months, Emilia Korczynska (VP of Marketing at Userpilot) ran a 1:Many ABM program on LinkedIn targeting 26,315 accounts.

It generated well over $5M in pipeline on roughly a tenth of that amount in ad spend, which works out to $10.79 in pipeline per dollar spent and over 2x ROAS in closed-won revenue.

The team kept increasing the budget from the original $20k to over $80k per month, so this is ABM at genuine scale.

The program ran on LinkedIn only, with no display ads, no 6sense, and no $100K/year ABM platform.

The stack was LinkedIn Campaign Manager, ZenABM for account scoring and attribution, HubSpot Marketing for CRM workflows (moving companies between campaign audiences, such as COLD to WARM, and from cold to open deals), Smartlead and Heyreach for follow-up outreach to interested accounts, and Clay for list building.

Total tool cost outside of ad spend: under $2,000/month.

This post shares what Emilia learned about running ABM at that scale, targeting thousands of accounts rather than dozens, without losing the personalization that makes ABM work.

The biggest risk with 1:Many ABM is not the targeting.

It is that you end up running what is effectively broad paid social with a company list filter, producing generic impressions that change nothing.


There are three types of ABM: 1:1 (hyper-personalized to individual accounts), 1:Few (personalized to segments of 25 to 200 accounts), and 1:Many (shared creative targeted at 200 to 5,000+ accounts via company lists).

Emilia Korczynska has the most experience with 1:Many, and recommends it as the starting point for nearly every team for three reasons.

With 1:1 ABM, you are running ads to maybe 10 to 25 accounts, which is not enough data to learn which creative works, which personas engage, or which messages resonate.

You are making decisions based on anecdotes rather than patterns.

With 1:Many targeting thousands of accounts, you get enough engagement data to identify real patterns within weeks.

As described in the complete ABM on LinkedIn guide, Userpilot’s 1:Many program was designed to identify accounts with intent from the broader SAM and surface them for more personalized campaigns and outreach.

You start wide, measure engagement, and then narrow.

The accounts that show high engagement in your 1:Many layer become candidates for 1:Few or 1:1 treatment.

LinkedIn’s CPMs are high. If you spread a $10K/month budget across 10 accounts in a 1:1 program, you are overpaying for frequency on a tiny audience.

If you target 3,000 accounts in a 1:Many program, the same budget buys you meaningful reach across a large enough audience that LinkedIn’s algorithm can optimize delivery efficiently.

As Philip Ilic (LinkedIn ads specialist) put it in his post:

“I always start with list-based targeting when running LinkedIn Ads. The fact that we can literally upload a list of companies into Campaign Manager and only show ads to those exact companies is still wildly underrated.”

The single most common mistake in ABM at scale is budget dilution, which happens when you launch too many ads with too little budget so none of them get enough impressions to learn or optimize.

Here is the math the team used, based on real performance data from the program.

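Work backwards from your revenue goal. If you want to close $1M in ARR from ABM with a $50K ACV, 25% close rate, and 75% qualification rate: $1,000,000 divided by $50,000 ACV equals 20 deals needed. At a 25% close rate, 20 deals require 80 qualified opportunities, and at a 75% qualification rate, 80 opportunities require roughly 107 demos.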

Working back through stage conversion rates gives you approximately 3,367 accounts to target.

These stage conversion benchmarks (55% become aware, 32% become interested, 18% reach considering) are based on Kyle Poyar’s ABX benchmarks from Growth Unhinged, which the team adapted for this program. They are a starting point and your numbers will vary.
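The back-calculation can be sketched in a few lines of Python. This is an illustrative reading rather than the team's actual spreadsheet: it assumes the three benchmark rates chain multiplicatively (identified to aware, aware to interested, interested to considering) and that "considering" corresponds to a booked demo. The function and key names are hypothetical.

```python
import math


def accounts_needed(revenue_goal, acv, close_rate, qualification_rate, stage_rates):
    """Work backwards from a revenue goal to the number of target accounts.

    stage_rates lists the stage conversion rates from the bottom of the
    funnel upwards (assumption: each rate applies to the stage above it).
    """
    deals = revenue_goal / acv                    # closed-won deals required
    opportunities = deals / close_rate            # qualified opportunities required
    demos = opportunities / qualification_rate    # demos required
    accounts = demos
    for rate in stage_rates:                      # climb back up the funnel
        accounts /= rate
    return {
        "deals": deals,
        "opportunities": opportunities,
        "demos": demos,
        "accounts": accounts,
    }


funnel = accounts_needed(
    revenue_goal=1_000_000,
    acv=50_000,
    close_rate=0.25,
    qualification_rate=0.75,
    stage_rates=[0.18, 0.32, 0.55],  # considering, interested, aware
)
print("Deals:", round(funnel["deals"]))
print("Opportunities:", round(funnel["opportunities"]))
print("Demos:", math.ceil(funnel["demos"]))
print("Target accounts:", round(funnel["accounts"]))
```

With the inputs from the example above, this reproduces the 107 demos and approximately 3,367 target accounts. Swapping in your own conversion rates changes the account count quickly, which is why the benchmarks should be treated as a starting point.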

There are two ways to calculate the budget. Method 1 is CPM-based: if you need 107 demos with a 1% landing page conversion rate and 0.4% CTR, you need 10,700 clicks and therefore approximately 2,675,000 impressions, and at $55 CPM that comes to $147,125, with a 15 to 20% margin of error added on top.

Method 2 is based on cost per conversion: if your cost per conversion (demo) is $1,100 and you need 107 demos, the budget is approximately $117,700.
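Both methods are simple enough to check by hand, but a short sketch makes it easier to rerun them with your own rates. The function names below are hypothetical, and the inputs are the figures from the example above.

```python
def cpm_based_budget(demos, landing_page_cvr, ctr, cpm):
    """Return (impressions, budget) using the CPM-based method."""
    clicks = demos / landing_page_cvr     # clicks needed to produce the demos
    impressions = clicks / ctr            # impressions needed to produce the clicks
    budget = impressions / 1000 * cpm     # CPM is the cost per 1,000 impressions
    return impressions, budget


def cost_per_demo_budget(demos, cost_per_demo):
    """Return the budget using a known cost per conversion (demo)."""
    return demos * cost_per_demo


impressions, base_budget = cpm_based_budget(
    demos=107, landing_page_cvr=0.01, ctr=0.004, cpm=55
)
print(f"Impressions needed:   {impressions:,.0f}")
print(f"CPM-based budget:     ${base_budget:,.0f}")
print(f"With 15-20% margin:   ${base_budget * 1.15:,.0f} to ${base_budget * 1.20:,.0f}")
print(f"Cost-per-demo budget: ${cost_per_demo_budget(107, 1100):,.0f}")
```

The two methods land roughly $30K apart on these inputs, so running both gives a range to plan against instead of a single point estimate.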

Use the ZenABM ABM Budget Calculator to run your specific numbers.

This is the constraint most teams ignore.

The rule is to target 3 to 4 clicks per ad per day, and at an average $8 CPC, each ad needs $25 to $32 per day to gather enough data.

The formula is: monthly budget divided by 30, divided by ($8 CPC x 4 clicks), which equals maximum effective ads.

With $10,000/month that works out to approximately 10 ads, enough for one persona in one campaign layer. Two personas require $20K/month and three personas require $30K.
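The formula above translates directly into a small helper. This is a minimal sketch; the function name and defaults are illustrative, with the $8 CPC and 4 clicks per ad per day taken from the rule stated above.

```python
def max_effective_ads(monthly_budget, cpc=8.0, clicks_per_ad_per_day=4, days=30):
    """Maximum number of ads a budget can support without dilution."""
    daily_budget = monthly_budget / days
    cost_per_ad_per_day = cpc * clicks_per_ad_per_day
    # Round down: a partially funded ad does not gather enough data.
    return int(daily_budget // cost_per_ad_per_day)


for budget in (10_000, 20_000, 30_000):
    print(f"${budget:,}/month supports up to {max_effective_ads(budget)} ads")
```

If your observed CPC differs from $8, pass it in as `cpc` and the ad ceiling moves with it.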

This math is non-negotiable, and it is why budget determines ad count rather than ambition.

The “without losing personalization” part of ABM at scale does not mean creating custom ads for each account, because that does not scale. Instead, personalization at the 1:Many level happens through three mechanisms.

Instead of running one giant campaign for all accounts, segment campaigns by the job-to-be-done or pain point you are addressing.

In Userpilot’s program, the team ran 12 intent-based campaign groups, each targeting the same account list but with different messaging themes: competitor displacement, product education, use-case specific content, and others.

This means an account that engages with the “competitor switching” campaign group gets tagged with that intent in ZenABM.

When a BDR later reaches out, they know this company is interested in switching from a competitor rather than just “interested in our product” generically, and that distinction is what makes outreach feel targeted rather than spray-and-pray.

LinkedIn lets you layer job title, seniority, and function filters on top of your company list.

Within each intent campaign, you create separate ad sets for different personas, such as PM/UX, CXO, Marketing, and CS, so each persona sees creative that speaks to their specific pain points and language.

As Philip Ilic noted:

“The most effective thought leader ads are niche-specific posts to niche-specific audiences. Posts that are too high-level or too general might do well organically, but with paid, I would get more specific.”

This applies directly to ABM at scale, where your 1:Many creative should still be persona-specific even if it is not account-specific.

Accounts in the COLD layer see awareness content such as thought leadership, industry insights, and problem framing.

Accounts that move to the WARM layer based on engagement scores see solution content, including product comparisons, case studies, and demo invitations.

The content changes based on where the account is in the journey rather than based on who they are.

In practice, a PM at Acme Corp first sees Thought Leader Ads (TLAs) from the team discussing industry challenges, and after 50+ impressions and a few clicks that move the account to “Aware” and then “Interested,” they start seeing product-focused single image ads with comparison data.

The creative progresses with the account’s engagement level rather than resetting with each campaign.

This is where one of the hardest lessons from the program showed up.

The team initially built a complex scoring model with weighted factors including ad impressions, website visits via reverse IP, content downloads, email opens, and qualitative intent signals.

It was beautiful on paper and completely unworkable in practice.

The problem was that website visitor de-anonymization was too unreliable.

The team set up a separate noindex domain exclusively for ABM landing pages, sent 300 visitors to a page, and Breeze Intelligence (Clearbit’s API) identified exactly one company, which was themselves.

According to a Syft study, Clearbit is actually the most accurate de-anonymization service available, which means even the best available accuracy is simply not enough to build a scoring model on. So the team simplified radically.

The scoring model now uses only LinkedIn ad engagement data, which comes directly from the API via ZenABM and is 100% reliable.

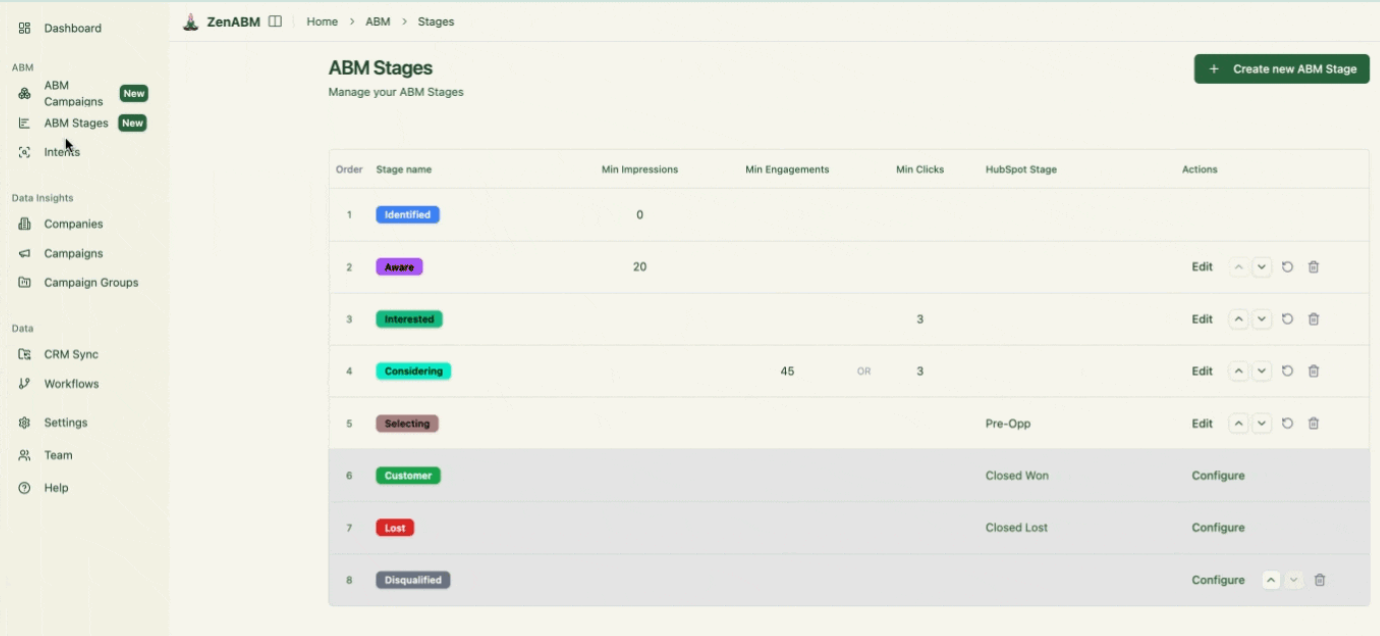

The stages are as follows:

| Stage | Threshold | What Happens |
|---|---|---|
| Identified | Account is on the target list | Sees COLD campaign ads |
| Aware | 50+ ad impressions | Continues seeing COLD ads, tracked for progression |
| Interested | 5+ clicks or 10+ engagements | Moves to WARM campaign, BDR outreach triggered |
| Considering | Booked a demo or started a trial | Sees conversion-focused ads, sales owns the relationship |
| Selecting | Open a deal in CRM | Sales-led, ads support deal progression |

ZenABM automatically assigns these stages based on engagement thresholds and pushes them as company properties into HubSpot.

HubSpot workflows then move contacts between LinkedIn audiences (COLD to WARM) based on stage changes, so the whole system runs on autopilot once configured.
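The threshold logic in the stage table is simple enough to express as a single function. The sketch below is not ZenABM's implementation. It is an illustration of the rules as stated, with hypothetical field names, and it assumes the highest qualifying stage wins.

```python
from dataclasses import dataclass


@dataclass
class AccountEngagement:
    """Per-account signals (hypothetical field names)."""
    impressions: int = 0
    clicks: int = 0
    engagements: int = 0
    demo_or_trial: bool = False   # booked a demo or started a trial
    open_deal: bool = False       # has an open deal in the CRM


def assign_stage(account: AccountEngagement) -> str:
    """Return the stage for an account, checking the deepest stage first."""
    if account.open_deal:
        return "Selecting"
    if account.demo_or_trial:
        return "Considering"
    if account.clicks >= 5 or account.engagements >= 10:
        return "Interested"
    if account.impressions >= 50:
        return "Aware"
    return "Identified"


print(assign_stage(AccountEngagement(impressions=12)))
print(assign_stage(AccountEngagement(impressions=80, clicks=2)))
print(assign_stage(AccountEngagement(impressions=300, clicks=6)))
```

Because every input is a count or a flag, the model can be debugged by inspecting a single account record, which is the practical advantage of keeping scoring this simple.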

The qualitative data, specifically which campaigns the account engaged with (competitor displacement, product education, specific use case), gets used for BDR outreach personalization rather than for scoring.

This separation of scoring (quantitative and simple) from personalization (qualitative and campaign-specific) was the key insight that made the system work at scale.

Based on the 500+ ads launched over 16 months and the ZenABM 2026 benchmarks, here is what the ad mix looks like per persona at scale:

| Format | Count per Persona | Role | Benchmark Performance |
|---|---|---|---|
| Thought Leader Ads | 5 | Awareness + engagement (cheapest traffic) | 2.68% CTR, $2.29 CPC |
| Single Image Ads | 5 | Product messaging + offer | 0.42% CTR, $13.23 CPC |
| Video Ads | 1-3 | Supporting awareness, build retargeting pools | 0.24% CTR, $15.61 CPC |
| Carousel Ads | 1-3 | Product education, comparison walkthroughs | 0.32% CTR, $13.30 CPC |
| Text Ads | 5 | Always-on brand visibility at low cost | Low CTR but cheap impressions |

That comes to 15 to 19 ads per persona total, but only if your budget supports it.

If you can only afford 10 ads, cut video and carousel first because TLAs and single-image ads are the core formats that drive both engagement data and pipeline.

This is where ABM at scale produces a pipeline rather than just impressions.

When accounts move to the “Interested” stage, they enter the outbound workflow.

The system Userpilot ran is covered in detail in the intent-based outbound guide.

The personalization that makes this work at scale is not “Dear Jane, I noticed Acme Corp has 500 employees,” which is useless.

It is: “I noticed your team has been looking at content about switching from [competitor]. We just published a comparison that other [industry] teams found useful.”

The intent data from campaign engagement makes this possible without manual research for each account.
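One way to picture this is a lookup from the intent tag to an opening line. The sketch below is purely illustrative: the tag name `competitor_switching` and the function are hypothetical, and the only template included is the example message quoted above.

```python
# Opening lines keyed by the intent tag of the campaign group the account
# engaged with. Only the example from this post is included; real programs
# would add one template per campaign theme.
TEMPLATES = {
    "competitor_switching": (
        "I noticed your team has been looking at content about switching "
        "from {competitor}. We just published a comparison that other "
        "{industry} teams found useful."
    ),
}


def outreach_opener(intent_tag, **fields):
    """Return a personalized opener, or None if no template matches the tag."""
    template = TEMPLATES.get(intent_tag)
    if template is None:
        return None  # no known intent: fall back to manual research
    return template.format(**fields)


print(outreach_opener("competitor_switching", competitor="[competitor]", industry="SaaS"))
```

The BDR still edits the message, but the starting point already reflects what the account engaged with.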

As Ali Yildirim emphasized: “Most teams pick LinkedIn ads or outbound email. Running both channels without unified audience data creates three problems: you’re building separate lists with no shared intelligence, timing is random instead of signal-driven, and messaging is disconnected.”

The 1:Many ABM combined with intent-led outbound solves all three of those problems simultaneously.

This would be incomplete without covering what did not work.

Here are the mistakes that cost the team time and money.

This was already covered above, but it bears repeating.

The team spent months building a multi-factor scoring model with website visits, email opens, content downloads, and weighted engagement.

It was fragile, unreliable due to the accuracy floor of de-anonymization tools, and impossible to debug when something went wrong.

The simplified model using only LinkedIn engagement data from the API took a weekend to set up and has been running flawlessly for over a year.

The early campaigns had roughly 100 ads across personas, and most of them received fewer than 500 impressions per month.

You cannot optimize an ad with 500 impressions, because the sample size is too small to distinguish signal from noise.

The team cut down to 15 to 19 ads per persona per campaign and immediately saw both better performance and better data quality.

The budget formula of $25 to $32 per ad per day exists because this was learned the hard way.
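A quick calculation shows why 500 impressions is too few. The sketch below multiplies 500 impressions by the benchmark CTRs from the ad mix table earlier in this post. The function name is illustrative.

```python
# Benchmark CTRs from the ad mix table, expressed as fractions.
BENCHMARK_CTR = {
    "Thought Leader Ads": 0.0268,
    "Single Image Ads": 0.0042,
    "Video Ads": 0.0024,
    "Carousel Ads": 0.0032,
}


def expected_clicks(impressions, ctr):
    """Expected number of clicks for a given impression count and CTR."""
    return impressions * ctr


for ad_format, ctr in BENCHMARK_CTR.items():
    clicks = expected_clicks(500, ctr)
    print(f"{ad_format}: about {clicks:.1f} clicks from 500 impressions")
```

For every format except Thought Leader Ads, 500 impressions yields only one or two expected clicks, so a single extra click can make an ad look twice as good as its neighbor.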

ABM audiences are small by design, since you are targeting maybe 3,000 to 5,000 people across your target accounts who match your persona filters.

At LinkedIn’s default delivery pace, each person sees each ad 8 to 10 times per month, which means if you do not refresh creative monthly, ad fatigue kills performance before your data has time to compound.

The team now introduces 2 to 3 new creatives per month per campaign as a standing rule.

In the first few months, every account on the list got the same treatment with no mechanism to identify which ones were actually engaging and route them differently.

Once the team implemented account scoring via ZenABM and stage-based campaign routing via HubSpot, cost per opportunity dropped by over 30% because budget started concentrating on accounts showing real signals rather than being spread evenly across the entire list.

| Metric | Result |
|---|---|
| Total accounts targeted | 26,315 |
| Total LinkedIn ad spend | $490,000 |
| Pipeline generated | $5,290,000 |
| Pipeline per $ spent | $10.79 |
| ROAS (closed-won) | 2x+ |
| Ads launched (total over 16 months) | 500+ |
| Core tool stack cost (excl. ad spend) | Under $500/month |

LinkedIn ABM outperformed cold outbound for Userpilot’s higher-ACV deals, generating pipeline faster with fewer people.

Once the system was in place, covering account list, campaign structure, scoring, stage routing, and outbound triggers, results became repeatable and predictable.

That is what ABM at scale should deliver.

The program described in this post targeted 26,315 accounts, spent $490K on LinkedIn ads over 16 months, and generated $5.29M in pipeline at $10.79 per dollar spent. None of that came from a sophisticated enterprise stack or a large team. It came from getting a small number of fundamentals right and then not breaking them as the program scaled.

One channel until the system works. Budget math done before the first ad launches. Scoring kept simple, because complex scoring models built on unreliable de-anonymization data fail quietly and expensively. Creative refreshed monthly, because ABM audiences are too small to ignore fatigue. And intent data from campaign engagement connected to outbound, so the pipeline comes from the ads rather than running parallel to them.

The mistakes in this post (overbuilt scoring, too many ads with too little budget, creative left to fatigue, and treating all accounts equally) are not edge cases. They are the default failure modes of first- and second-generation ABM programs. The fix in every case was the same: simplify the mechanism, make it reliable, and let it run at scale.

To see how ZenABM can make ABM a breeze, book a demo now or grab your free 37-day trial.

Some common questions about ABM at scale and their answers:

ABM at scale refers to running account-based marketing programs targeting hundreds or thousands of accounts via a 1:Many strategy, while maintaining relevance through intent-based segmentation, persona targeting, and stage-based content progression.

It is distinct from 1:1 ABM (hyper-personalized to individual accounts) and 1:Few ABM (personalized to small segments). At scale, personalization comes from the system design rather than custom creative per account.

Work backwards from your revenue goal. For a $1M ARR target with $50K ACV, 25% close rate, and typical ABM stage conversion benchmarks, you need approximately 3,000 to 4,000 target accounts.

Use the ZenABM ABM Budget Calculator to run your specific numbers.

The minimum practical size for 1:Many ABM on LinkedIn is around 500 to 1,000 accounts to ensure sufficient audience size for delivery.

Three things make the difference: segment campaigns by intent or topic so each campaign group addresses a specific pain point; use persona-level ad targeting within campaigns so each role sees relevant messaging; and implement account scoring with stage-based content progression so accounts see increasingly product-focused content as they engage more.

The fourth layer is connecting ad engagement to outbound: when accounts show intent signals, trigger personalized BDR outreach referencing the specific topics they engaged with.

To run a meaningful 1:Many program targeting one persona, you need at least $8,000 to $10,000/month.

This supports approximately 10 to 15 ads getting 3 to 4 clicks per day each at $8 average CPC.

Below this threshold, budget dilution makes it impossible for individual ads to gather enough data to optimize. For two personas, double it.

For the full orchestration stack described above, the team spent $30K/month at peak.

Website de-anonymization should not be the basis for account scoring. Based on Userpilot’s experience, match rates are too unreliable: the team tested Breeze Intelligence (Clearbit), and it identified 1 out of 300 known visitors to their ABM landing pages. Use LinkedIn ad engagement data from the API via tools like ZenABM for scoring, since it is 100% reliable. De-anonymization tools such as Vector and RB2B are useful for the identification layer, meaning knowing which companies visit your website, but they should not be the foundation of your scoring model.